OpenClaw Architecture - Part 2: Concurrency, Isolation, and the Invariants That Keep Agents Sane

Why agents feel “haunted”… and why it’s really just concurrency + isolation + invariants

In Part 1, the spooky moment was the “3:00 AM phone call” question:

Why did my assistant do something while I was sleeping?

Part 2 is the scarier follow-up:

What stops it from doing two things at once and corrupting its own state?

Because the moment you have:

multiple chat surfaces (WhatsApp + Slack + WebChat),

time-based triggers (heartbeats, cron),

external triggers (webhooks),

and long-running tool chains (browser + shell + file writes),

…you’ve built a concurrency system, whether you meant to or not.

The moment I saw the session-lane design, I stopped thinking of “agent magic” and started thinking in terms of state machine guarantees.

What I like about OpenClaw is that it doesn’t “solve autonomy” with clever prompting.

It makes autonomy legible by enforcing a small set of invariants and building the queueing model around them.

The core idea is simple: serialize writes per session, throttle global work, and define exactly what happens when new input arrives mid-run.

1) Why agent systems need invariants (or they feel “haunted”)

If I’m reviewing an agent runtime, this is the part I always look for first:

What are the invariants and where are they enforced?

Because without invariants, agents don’t fail loudly.

They fail subtly.

A file gets half-written. A webhook replays after a reconnect. A second reply sneaks through.

And suddenly the system feels “haunted.”

Not because it’s intelligent.

Because you violated an invariant.

2) The invariant that matters most: the single-writer rule

Here’s the invariant that explains a lot of the stability:

Only one agent run should touch a given session at a time.

OpenClaw enforces this with a lane-aware FIFO queue:

Each session gets its own lane:

session:<key>

That lane guarantees only one active run per session

And then each run also goes through a global lane (more on that next)
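To make the rule concrete, here’s a minimal TypeScript sketch of a per-session lane. It assumes a lane can be modeled as a promise chain keyed by `session:<key>`. `SessionLanes` and `enqueue` are hypothetical names for illustration, not OpenClaw’s API, and the global lane is left out.

```typescript
// Hypothetical sketch: one FIFO lane per session, modeled as a promise chain.
// Not OpenClaw's actual implementation; names are illustrative.
class SessionLanes {
  // Tail of each lane; the next run for that session starts only after it settles.
  private tails = new Map<string, Promise<unknown>>();

  enqueue<T>(sessionKey: string, task: () => Promise<T>): Promise<T> {
    const lane = `session:${sessionKey}`;
    const prev = this.tails.get(lane) ?? Promise.resolve();
    const run = prev.then(() => task());
    // The stored tail never rejects, so one failed run doesn't block the lane.
    const tail = run.catch(() => undefined);
    this.tails.set(lane, tail);
    // Drop the lane once it is idle, so the map doesn't grow without bound.
    void tail.then(() => {
      if (this.tails.get(lane) === tail) this.tails.delete(lane);
    });
    return run;
  }
}
```

Two runs for the same session queue behind each other, while runs for different sessions proceed in parallel.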

This is the part that stops the classic “two thoughts at once” failure modes:

tool calls interleave in the wrong order

two runs both mutate the same session transcript

you get duplicate sends or contradictory actions

the agent continues a plan that’s already obsolete

The unglamorous truth is: once tools and state are involved, coordination matters more than clever prompts.

3) The queue isn’t a detail, it’s the control mechanism

If you skim the docs or the codebase, don’t skim this part.

OpenClaw isn’t optimizing for “agent vibes.” It’s optimizing for boring system realities:

LLM runs are expensive

inputs can arrive back-to-back (message + webhook + heartbeat)

shared resources shouldn’t be contended (session files, logs, CLI stdin)

your own agent shouldn’t accidentally DDoS upstream providers and trip their rate limits

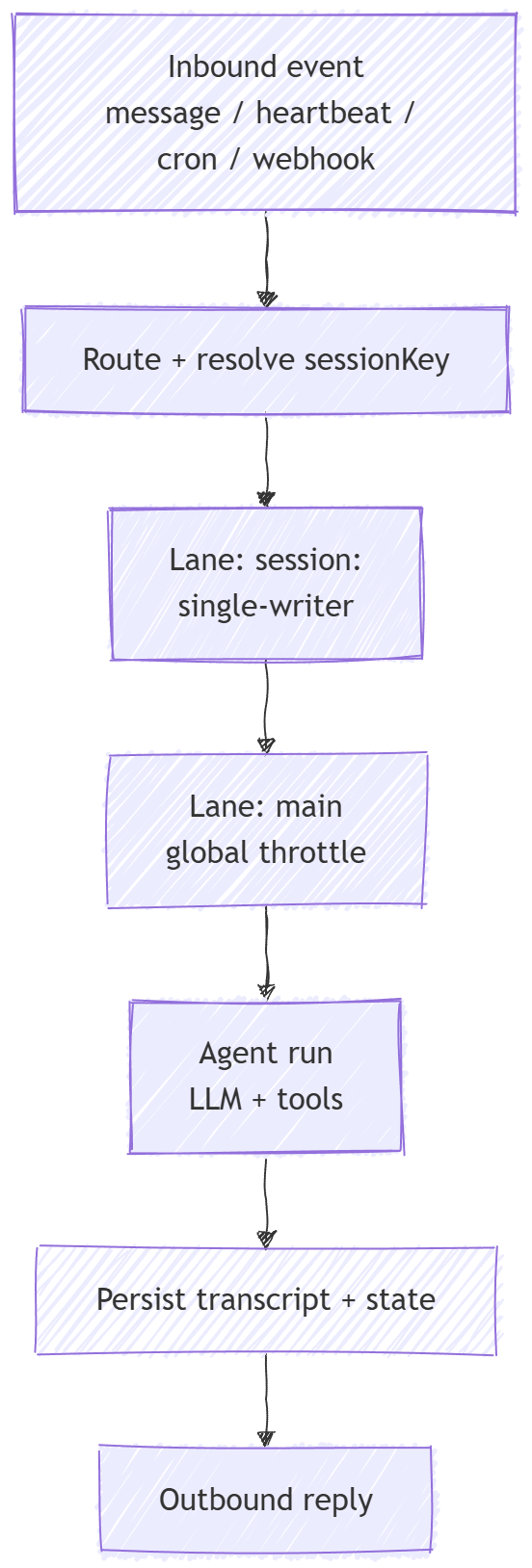

The two-stage model: per-session serialization + global throttling

OpenClaw’s queue does two things:

Per-session serialization

The runtime enqueues work by session key into a lane session:<key>, which guarantees only one active run per session at a time.

Global throttling

That session-scoped work then goes through a global lane (main by default), so overall parallelism is capped by agents.defaults.maxConcurrent.

Two details that are easy to gloss over, but matter more than they look:

typing indicators can still fire immediately on enqueue (UX doesn’t “freeze” just because the run is waiting)

it’s a small in-process queue (no external workers, no background threads), often described as “pure TypeScript + promises”
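The two stages compose naturally. Below is a hedged sketch that assumes the global lane behaves like a FIFO counting semaphore sized by a `maxConcurrent` value, standing in for `agents.defaults.maxConcurrent`. `GlobalLane`, `TwoStageQueue`, and the `onEnqueue` hook are hypothetical. The hook fires synchronously on enqueue, which is where a typing indicator could start even while the run is still waiting.

```typescript
// Hypothetical sketch of the two-stage model: per-session FIFO, then a global cap.
// Names are illustrative; maxConcurrent mirrors the role of agents.defaults.maxConcurrent.
class GlobalLane {
  private active = 0;
  private waiters: Array<() => void> = [];
  constructor(private readonly maxConcurrent: number) {}

  async run<T>(task: () => Promise<T>): Promise<T> {
    if (this.active >= this.maxConcurrent) {
      // Wait (FIFO) until a finishing run hands over its slot.
      await new Promise<void>((resolve) => this.waiters.push(resolve));
    } else {
      this.active++;
    }
    try {
      return await task();
    } finally {
      const next = this.waiters.shift();
      if (next) next(); // hand the slot directly to the next waiter
      else this.active--;
    }
  }
}

class TwoStageQueue {
  // Per-session tails (no cleanup, for brevity).
  private tails = new Map<string, Promise<unknown>>();
  constructor(
    private readonly globalLane: GlobalLane,
    private readonly onEnqueue: (sessionKey: string) => void = () => {},
  ) {}

  enqueue<T>(sessionKey: string, task: () => Promise<T>): Promise<T> {
    this.onEnqueue(sessionKey); // e.g. start a typing indicator immediately
    const prev = this.tails.get(sessionKey) ?? Promise.resolve();
    // Stage 1: wait for this session's previous run. Stage 2: wait for a global slot.
    const run = prev.then(() => this.globalLane.run(task));
    this.tails.set(sessionKey, run.catch(() => undefined));
    return run;
  }
}
```

A run holds its session lane while it waits for a global slot, so a session never has two runs in flight even when the global lane is saturated.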

If you remember one picture from this post, make it this one:

4) Queue modes: determinism vs responsiveness (and why “steer” exists)

Now the real concurrency question:

What happens when a new message arrives while the agent is mid-run?

OpenClaw doesn’t hide this behind vague “agent behavior.”

It exposes it as explicit policy: queue modes.

The modes are:

collect (default): coalesce queued messages into one follow-up turn

followup: always wait until the current run ends

steer: inject into the current run (at tool boundaries)

steer-backlog: steer now and also preserve for follow-up

interrupt (legacy): abort the active run, then run the newest message

If you’ve ever seen an agent “spam replies” or ignore a correction mid-run, this is the knob that decides that behavior.
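One way to picture queue modes as explicit policy is a function that maps the configured mode, plus the messages that arrived mid-run, to a plan. The mode names come from the list above. The `Plan` shape and the choices marked as assumptions in the comments (one turn per message for `followup`, a single batch for `steer-backlog`) are illustrative guesses, not documented OpenClaw behavior.

```typescript
// Hypothetical sketch: queue modes as an explicit policy function.
// Mode names are from the docs; the Plan shape is illustrative only.
type QueueMode = "collect" | "followup" | "steer" | "steer-backlog" | "interrupt";

interface Plan {
  abortActiveRun: boolean;      // stop the current run before anything else
  injectIntoActiveRun: boolean; // deliver pending messages at the next tool boundary
  followUpTurns: string[][];    // batches of messages, one batch per later turn
}

function planForPending(mode: QueueMode, pending: string[]): Plan {
  const asOneTurn = pending.length > 0 ? [pending] : [];
  switch (mode) {
    case "collect": // coalesce everything queued into a single follow-up turn
      return { abortActiveRun: false, injectIntoActiveRun: false, followUpTurns: asOneTurn };
    case "followup": // wait for the run to end; assumption: one turn per message
      return { abortActiveRun: false, injectIntoActiveRun: false, followUpTurns: pending.map((m) => [m]) };
    case "steer": // inject into the current run at the next tool boundary
      return { abortActiveRun: false, injectIntoActiveRun: true, followUpTurns: [] };
    case "steer-backlog": // steer now and also keep them for later (assumption: one batch)
      return { abortActiveRun: false, injectIntoActiveRun: true, followUpTurns: asOneTurn };
    case "interrupt": // legacy: abort the active run, then run only the newest message
      return {
        abortActiveRun: true,
        injectIntoActiveRun: false,
        followUpTurns: pending.length > 0 ? [pending.slice(-1)] : [],
      };
  }
}
```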

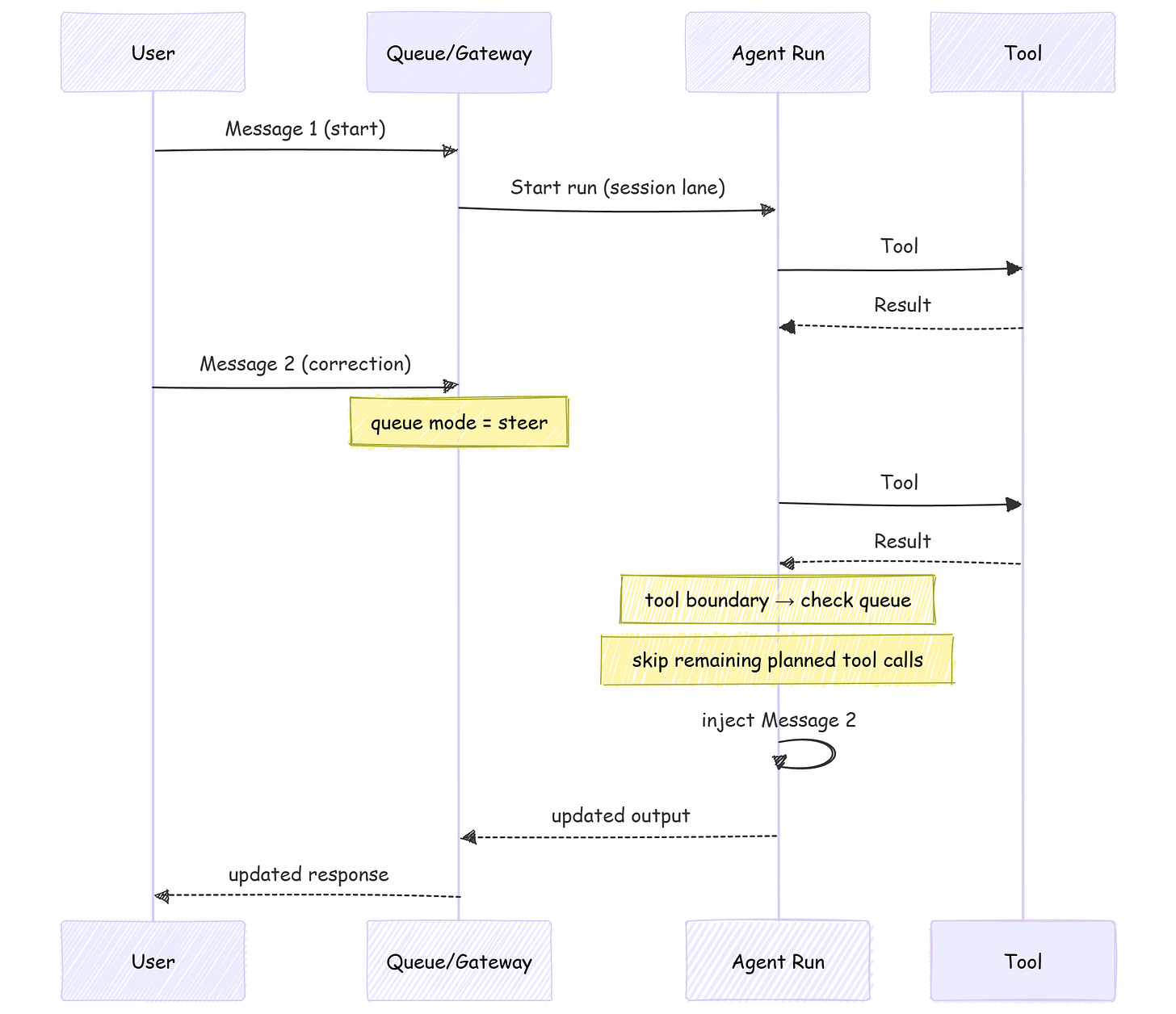

What “steer” actually means

This is the contract:

the queue is checked after each tool call

if a queued message exists, remaining tool calls from the current assistant message are skipped

then the queued user message is injected before the next assistant response
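A minimal sketch of that contract follows. It assumes a run executes the tool calls from one assistant message in order and can see the session’s pending messages as a simple array. `executeWithSteer`, `ToolCall`, and `Entry` are hypothetical names, and a real transcript format will differ.

```typescript
// Hypothetical sketch of the steer contract; not OpenClaw's implementation.
interface ToolCall { name: string; run: () => Promise<string>; }
interface Entry { role: "tool" | "user"; text: string; }

/** Runs one assistant message's tool calls; returns the names of any skipped calls. */
async function executeWithSteer(
  toolCalls: ToolCall[], // tool calls from a single assistant message
  inbox: string[],       // user messages queued for this session
  transcript: Entry[],
): Promise<string[]> {
  for (let i = 0; i < toolCalls.length; i++) {
    const call = toolCalls[i];
    // A tool call always runs to completion; we never preempt inside it.
    const result = await call.run();
    transcript.push({ role: "tool", text: `${call.name}: ${result}` });
    // Tool boundary: check the queue.
    if (inbox.length > 0) {
      // Inject queued user messages so they precede the next assistant response.
      for (const msg of inbox.splice(0)) transcript.push({ role: "user", text: msg });
      // Skip the rest of this assistant message's tool calls.
      return toolCalls.slice(i + 1).map((c) => c.name);
    }
  }
  return [];
}
```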

A simple failure case I couldn’t unsee:

A Slack correction arrives while a long browser + shell chain is mid-flight.

Without a policy, you either ignore the correction (bad UX) or you preempt inside a tool call (unsafe). steer picks a third option: preempt at tool boundaries.

Here’s the mental model I used while reading the steering behavior:

(One nuance worth remembering: depending on streaming/surface behavior, steer can behave closer to a follow-up on some surfaces.)

5) Transport semantics: dedupe + debouncing (the plumbing you don’t get to ignore)

This is one of those “engineer gut check” sections.

If you don’t handle transport semantics, you’ll end up blaming the model for bugs you introduced in the plumbing.

5.1 Inbound dedupe

Real channels redeliver. Reconnects happen. Retries happen.

OpenClaw accounts for that with a short-lived dedupe cache keyed by things like channel/account/peer/session/message id, so duplicate deliveries don’t trigger another run.
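Here’s a sketch of such a cache. It assumes messages carry channel, account, peer, session, and message ids under the hypothetical field names below, and that a fixed TTL is acceptable. `DedupeCache` is illustrative, not OpenClaw’s class. The clock is passed in so the behavior is easy to test.

```typescript
// Hypothetical sketch of a short-lived inbound dedupe cache; field names are assumptions.
interface InboundMessage {
  channel: string;
  accountId: string;
  peerId: string;
  sessionKey: string;
  messageId: string;
}

class DedupeCache {
  private seen = new Map<string, number>(); // dedupe key -> expiry timestamp (ms)
  constructor(private readonly ttlMs: number) {}

  /** True the first time a message is seen within the TTL; false for redeliveries. */
  accept(msg: InboundMessage, now: number = Date.now()): boolean {
    // Evict expired entries so the cache stays short-lived.
    this.seen.forEach((expiry, key) => {
      if (expiry <= now) this.seen.delete(key);
    });
    const key = [msg.channel, msg.accountId, msg.peerId, msg.sessionKey, msg.messageId].join("|");
    if (this.seen.has(key)) return false;
    this.seen.set(key, now + this.ttlMs);
    return true;
  }
}
```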

5.2 Inbound debouncing

Humans type in bursts.

OpenClaw can batch rapid consecutive text messages into a single agent turn via messages.inbound.debounceMs (with per-channel overrides).

Two operational details that make it feel sane:

debounce applies to text-only messages; attachments flush immediately

control commands bypass debouncing so they remain standalone
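A deterministic sketch of those rules is below. `debounceMs` plays the role of `messages.inbound.debounceMs`. Treating messages that start with `/` as control commands, flushing buffered text together with an attachment, and driving flushes from an explicit `tick(now)` are assumptions made for illustration. `InboundDebouncer` is a hypothetical name.

```typescript
// Hypothetical debouncer sketch; debounceMs stands in for messages.inbound.debounceMs.
// Assumption: control commands are messages that start with "/".
interface Inbound { sessionKey: string; text: string; hasAttachment?: boolean; }

class InboundDebouncer {
  private buffers = new Map<string, { texts: string[]; lastAt: number }>();
  constructor(
    private readonly debounceMs: number,
    private readonly dispatch: (sessionKey: string, turn: string[]) => void,
  ) {}

  push(msg: Inbound, now: number): void {
    if (msg.text.startsWith("/")) {
      this.dispatch(msg.sessionKey, [msg.text]); // control command: standalone, never batched
      return;
    }
    const buf = this.buffers.get(msg.sessionKey) ?? { texts: [], lastAt: now };
    buf.texts.push(msg.text);
    buf.lastAt = now;
    this.buffers.set(msg.sessionKey, buf);
    // Attachments flush immediately (assumption: together with any buffered text).
    if (msg.hasAttachment) this.flush(msg.sessionKey);
  }

  /** Call periodically, e.g. from a timer: flushes sessions quiet for debounceMs. */
  tick(now: number): void {
    this.buffers.forEach((buf, key) => {
      if (now - buf.lastAt >= this.debounceMs) this.flush(key);
    });
  }

  private flush(sessionKey: string): void {
    const buf = this.buffers.get(sessionKey);
    if (!buf) return;
    this.buffers.delete(sessionKey);
    this.dispatch(sessionKey, buf.texts); // one batch becomes one agent turn
  }
}
```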

6) Isolation: session keys are boundaries (and dmScope is a safety switch)

The queue prevents “two writers.”

Isolation prevents “wrong context.”

OpenClaw’s session model is explicit:

one direct-chat session per agent is treated as primary

direct chats collapse to agent:<agentId>:<mainKey> (default main)

group/channel chats get their own keys

This gets security-relevant fast. If your agent can receive DMs from multiple people, the default behavior (every DM collapsing into the one main session) can leak one person’s private context into another person’s conversation. The fix is “secure DM mode” via dmScope:

```json5
// ~/.openclaw/openclaw.json
{
  session: {
    dmScope: "per-channel-peer"
  }
}
```
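To see why the switch matters, here’s a hypothetical session-key resolver. The default collapse to `agent:<agentId>:<mainKey>` follows the description above. The key layouts for per-peer DMs and groups, and the name `main` for the default scope, are invented for illustration. Only two scopes are modeled.

```typescript
// Hypothetical session-key resolver. "per-channel-peer" is from the docs; the "main"
// scope name and the peer/group key layouts below are illustrative assumptions.
type DmScope = "main" | "per-channel-peer";

interface ChatContext {
  agentId: string;
  channel: string;           // e.g. "whatsapp", "slack"
  kind: "direct" | "group";
  peerId: string;            // DM sender id, or group/channel id
}

function resolveSessionKey(ctx: ChatContext, dmScope: DmScope = "main", mainKey = "main"): string {
  if (ctx.kind === "group") {
    // Group/channel chats always get their own key.
    return `agent:${ctx.agentId}:${ctx.channel}:group:${ctx.peerId}`;
  }
  if (dmScope === "per-channel-peer") {
    // Secure DM mode: each sender on each channel gets an isolated session.
    return `agent:${ctx.agentId}:${ctx.channel}:dm:${ctx.peerId}`;
  }
  // Default: every direct chat collapses into the primary session.
  return `agent:${ctx.agentId}:${mainKey}`;
}
```

With the default scope, two different DM senders resolve to the same key (and therefore the same context); with `per-channel-peer` they don’t.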

And importantly: the Gateway is the source of truth.

Clients shouldn’t “reconstruct” session state by parsing transcripts; they query the Gateway for session lists and token counts.

That’s a control-plane choice, and it’s one of the reasons these invariants hold up across multiple UIs and devices.

7) Serialization is necessary, not sufficient


It keeps your session history sane.

It doesn’t automatically make your side-effects safe.

Even with perfect “single writer per session,” you can still have:

two different sessions mutating the same external resource

tool calls that aren’t idempotent (calling twice causes real damage)

long tool calls finishing with stale assumptions

This is why OpenClaw doesn’t stop at “one queue.” It also:

throttles global concurrency (global lane + agents.defaults.maxConcurrent)

uses/supports idempotency keys for side-effecting methods (e.g., send, agent) so retries can be deduped safely
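A sketch of how idempotency keys make retries safe, assuming the caller supplies one key per logical operation (for example, one per outgoing message). `IdempotentExecutor` is hypothetical. It caches the in-flight promise, so concurrent retries also collapse into a single side effect. A production version would also expire old keys.

```typescript
// Hypothetical sketch: dedupe retries of side-effecting calls (e.g. a "send") by key.
// Not the Gateway's actual mechanism; key expiry is omitted for brevity.
class IdempotentExecutor<T> {
  private results = new Map<string, Promise<T>>();

  execute(idempotencyKey: string, effect: () => Promise<T>): Promise<T> {
    const existing = this.results.get(idempotencyKey);
    // Retry with a known key: return the original outcome, don't repeat the side effect.
    if (existing) return existing;
    const p = effect();
    this.results.set(idempotencyKey, p);
    // If the effect failed, forget the key so a genuine retry can try again.
    p.catch(() => this.results.delete(idempotencyKey));
    return p;
  }
}
```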

And at the protocol layer, the Gateway keeps the control plane boring on purpose:

handshake is mandatory; the first frame must be connect

events aren’t replayed; if you miss something, you refresh and recover
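Here’s a sketch of a connect-first rule on the server side. The frame shapes are assumptions, not the Gateway’s actual wire format, and `GatewayConnection` is a hypothetical name.

```typescript
// Hypothetical sketch of a connect-first connection; frame shapes are assumptions.
type Frame =
  | { type: "connect"; clientId: string }
  | { type: "req"; id: string; method: string };

interface Reply { ok: boolean; error?: string; }

class GatewayConnection {
  private state: "awaiting-connect" | "connected" | "closed" = "awaiting-connect";

  handle(frame: Frame): Reply {
    if (this.state === "closed") return { ok: false, error: "connection closed" };
    if (this.state === "awaiting-connect") {
      if (frame.type !== "connect") {
        // The first frame must be connect; anything else ends the connection.
        this.state = "closed";
        return { ok: false, error: "first frame must be connect" };
      }
      this.state = "connected";
      return { ok: true };
    }
    // Connected: dispatch the request (omitted in this sketch).
    return { ok: true };
  }
}
```

Because events aren’t replayed, a client that reconnects should re-query state from the Gateway (session lists, token counts) rather than try to reconstruct what it missed.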

That’s the theme of this whole architecture: constraints first.

Not vibes. Not magic. Just guardrails that make “always-on” behavior legible.

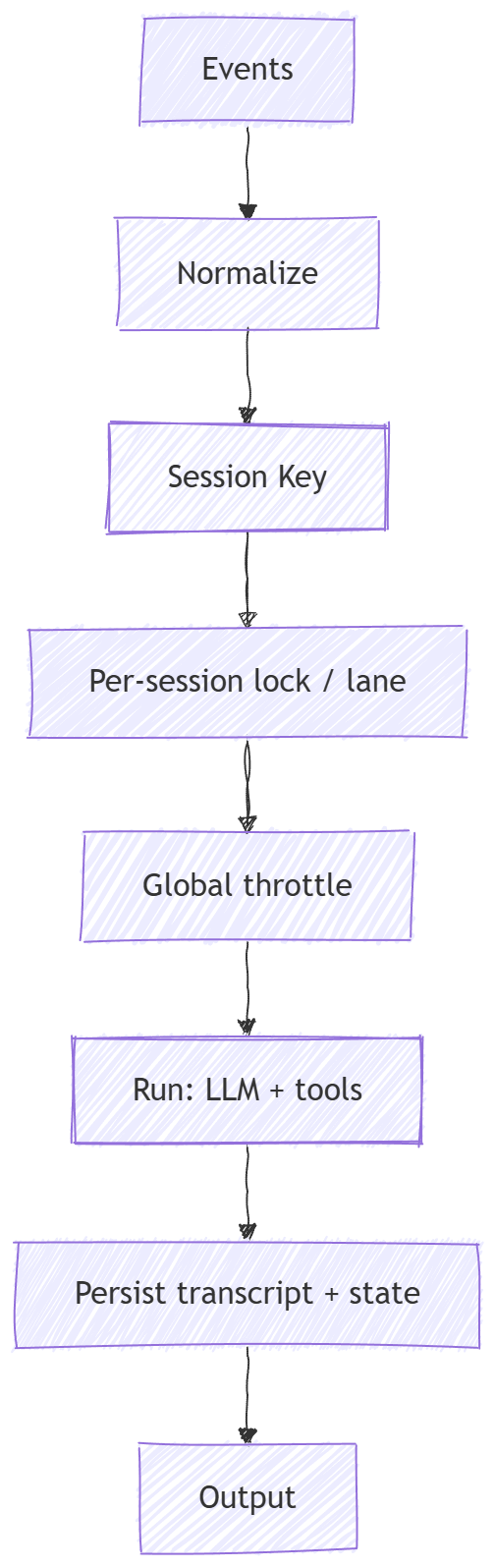

8) If you’re building your own: copy the invariants, not the hype

If you want the “feels alive” effect without shipping chaos, here’s the blueprint I’d steal:

Normalize all inputs into one event format

Resolve a session key (your isolation boundary)

Enforce single-writer per session

Add a global concurrency throttle

Decide how mid-flight messages interact (collect / followup / steer)

Add inbound dedupe + debounce (real channels redeliver, humans type in bursts)

Persist an append-only transcript so you can debug and audit what happened

Here’s the “invariants-first agent runtime” pattern:
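A compact sketch of several blueprint steps working together: a normalized event type, an inbound dedupe set, a single-writer lane per session key, and an append-only JSONL transcript. Global throttling, queue modes, and debouncing are left out to keep it short. `AgentEvent`, `MiniRuntime`, and `fromWebhook` are hypothetical names, and the webhook body shape is an assumption.

```typescript
// Hypothetical sketch of an invariants-first runtime core; all names are illustrative.
// Step 1: every input (chat, cron, webhook) is normalized into one event shape.
interface AgentEvent {
  source: "chat" | "cron" | "webhook";
  sessionKey: string; // step 2: the isolation boundary, resolved before queueing
  id: string;         // transport-level id used for dedupe
  text: string;
}

// Example normalizer for one input type (the body shape is an assumption).
function fromWebhook(body: { deliveryId: string; payload: string }, sessionKey: string): AgentEvent {
  return { source: "webhook", sessionKey, id: body.deliveryId, text: body.payload };
}

class MiniRuntime {
  private seen = new Set<string>();                 // inbound dedupe (no TTL, for brevity)
  private lanes = new Map<string, Promise<void>>(); // single writer per session key
  readonly transcript: string[] = [];               // append-only JSONL lines

  constructor(private readonly agent: (e: AgentEvent) => Promise<string>) {}

  /** Returns the run's promise, or null if the event is a redelivery. */
  submit(e: AgentEvent): Promise<void> | null {
    const dedupeKey = `${e.source}|${e.sessionKey}|${e.id}`;
    if (this.seen.has(dedupeKey)) return null;
    this.seen.add(dedupeKey);
    const prev = this.lanes.get(e.sessionKey) ?? Promise.resolve();
    const run = prev.then(() => this.turn(e));
    this.lanes.set(e.sessionKey, run.catch(() => undefined));
    return run;
  }

  private async turn(e: AgentEvent): Promise<void> {
    this.append({ session: e.sessionKey, role: "user", source: e.source, text: e.text });
    const reply = await this.agent(e);
    this.append({ session: e.sessionKey, role: "assistant", text: reply });
  }

  private append(entry: Record<string, string>): void {
    // Entries are only ever pushed, never edited or removed, so the log stays auditable.
    this.transcript.push(JSON.stringify(entry));
  }
}
```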

The unglamorous truth: this layer, not the prompt, is what decides whether your agent is a toy or something you can actually leave running.

9) Go verify: the exact docs I leaned on

If you want to sanity-check any claims above, these are the pages I kept coming back to:

Session Management (dmScope, secure DM mode, gateway as truth, JSONL locations)

Gateway Architecture (connect-first, idempotency, events not replayed)

Next up in Part 3: memory/state ownership in practice and why “better context management” is mostly a systems problem, not a prompting trick.
